Apple today unveiled several innovative technologies that make it easier and faster for developers to create powerful new apps. SwiftUI is a new development framework that makes building powerful user interfaces easier than ever before. ARKit 3, RealityKit and Reality Composer are advanced tools designed to make it even easier for developers to create compelling AR experiences for consumer and business apps.

New tools and APIs greatly simplify the process of bringing iPad apps to Mac. And updates to Core ML and Create ML allow for more powerful and streamlined on-device machine learning apps.

“The new app development technologies unveiled today make app development faster, easier and more fun for developers, and represent the future of app creation across all Apple platforms,” said Craig Federighi, Apple’s senior vice president of Software Engineering.

“SwiftUI truly transforms user interface creation by automating large portions of the process and providing real-time previews of how UI code looks and behaves in-app. We think developers are going to love it.”

SwiftUI

SwiftUI provides an extremely powerful and intuitive new user interface framework for building sophisticated app UIs. Using simple, easy-to-understand declarative code, developers can create stunning, full-featured user interfaces complete with smooth animations.

SwiftUI saves developers time by providing a huge amount of automatic functionality including interface layout, Dark Mode, Accessibility, right-to-left language support and internationalization.

SwiftUI apps run natively and are lightning fast. And because SwiftUI is the same API built into iOS, iPadOS, macOS, watchOS and tvOS, developers can more quickly and easily build rich, native apps across all Apple platforms.

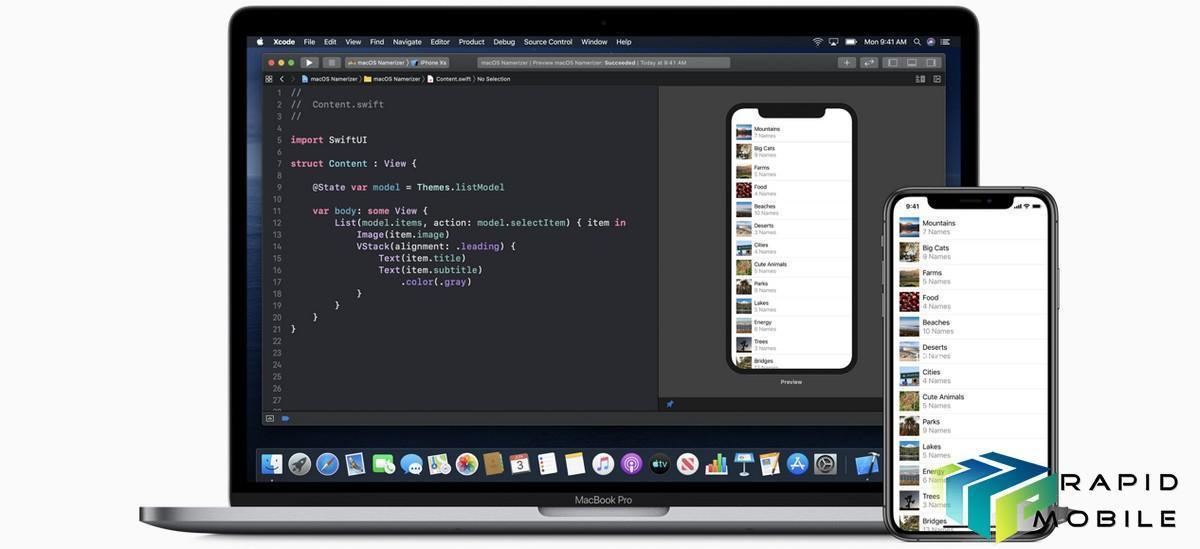

Xcode 11 and SwiftUI

A new graphical UI design tool built into Xcode 11 makes it easy for UI designers to quickly assemble a user interface with SwiftUI — without having to write any code. Swift code is automatically generated and when this code is modified, the changes to the UI instantly appear in the visual design tool.

Now developers can see automatic, real-time previews of how the UI will look and behave as they assemble, test and refine their code. The ability to fluidly move between graphical design and writing code makes UI development more efficient and makes it possible for software developers and UI designers to collaborate more closely.

Previews can run directly on connected Apple devices, including iPhone, iPad, iPod touch, Apple Watch and Apple TV, allowing developers to see how an app responds to Multi-Touch, or works with the camera and on-board sensors — live, as the interface is being built.

SwiftUI uses a declarative syntax so you can simply state what your user interface should do. For example, you can write that you want a list of items consisting of text fields, then describe alignment, font, and color for each field. Your code is simpler and easier to read than ever before, saving you time and maintenance.
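As a rough illustration of the declarative style described above, the following sketch declares a list whose rows contain two text items with alignment, font and color applied. The `Landmark` type, the `LandmarkList` view and the sample data are hypothetical names invented for this example; they are not part of SwiftUI.

```swift
import SwiftUI

// Hypothetical model type used only for this illustration.
struct Landmark: Identifiable {
    let id: Int
    let name: String
    let region: String
}

// Hypothetical sample data.
let sampleLandmarks = [
    Landmark(id: 1, name: "Turtle Rock", region: "Joshua Tree"),
    Landmark(id: 2, name: "Silver Salmon Creek", region: "Lake Clark"),
]

// The body states what the interface contains; SwiftUI works out the layout.
struct LandmarkList: View {
    let landmarks: [Landmark]

    var body: some View {
        List(landmarks) { landmark in
            // Each row: two text items, leading-aligned.
            VStack(alignment: .leading) {
                Text(landmark.name)
                    .font(.headline)
                Text(landmark.region)
                    .font(.subheadline)
                    .foregroundColor(.secondary)
            }
        }
    }
}
```

A view like this could be created with `LandmarkList(landmarks: sampleLandmarks)` and placed inside any other SwiftUI view.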

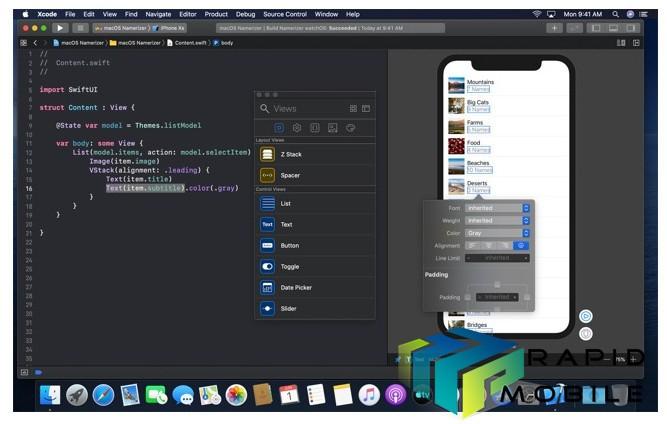

Drag and drop. Arrange components within your user interface by simply dragging controls on the canvas. Click to open an inspector to select font, color, alignment, and other design options, and easily re-arrange controls with your cursor. Many of these visual editors are also available within the code editor, so you can use inspectors to discover new modifiers for each control, even if you prefer hand-coding parts of your interface. You can also drag controls from your library and drop them on the design canvas or directly on the code.

Dynamic replacement. The Swift compiler and runtime are fully embedded throughout Xcode, so your app is constantly being built and run. The design canvas you see isn’t just an approximation of your user interface — it’s your live app. And Xcode can swap edited code directly in your live app with “dynamic replacement”, a new feature in Swift.

Previews. You can now create one or more previews of any SwiftUI view, supply them with sample data, and configure almost anything your users might see, such as large fonts, localizations, or Dark Mode. Previews can also display your UI on any device and in any orientation.
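A minimal sketch of how several previews of one view might be declared, assuming a small hypothetical `GreetingView`. The view, its sample data and the locale identifier are illustrative choices only; the environment values shown are one possible way to simulate Dark Mode, large text and a different locale.

```swift
import SwiftUI

// Hypothetical view used only for this illustration.
struct GreetingView: View {
    let name: String

    var body: some View {
        Text("Hello, \(name)")
            .font(.title)
            .padding()
    }
}

// Xcode renders one canvas preview per view in the group.
struct GreetingView_Previews: PreviewProvider {
    static var previews: some View {
        Group {
            // Default appearance with sample data.
            GreetingView(name: "Sample User")

            // Dark Mode.
            GreetingView(name: "Sample User")
                .environment(\.colorScheme, .dark)

            // A large accessibility text size.
            GreetingView(name: "Sample User")
                .environment(\.sizeCategory, .accessibilityExtraLarge)

            // A different locale (the identifier is an arbitrary example).
            GreetingView(name: "Sample User")
                .environment(\.locale, Locale(identifier: "ar"))
        }
        .previewLayout(.sizeThatFits)
    }
}
```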

Augmented Reality

ARKit 3 puts people at the center of AR. With Motion Capture, developers can integrate people’s movement into their app, and with People Occlusion, AR content will show up naturally in front of or behind people to enable more immersive AR experiences and fun green screen-like applications.

ARKit 3 also enables the front camera to track up to three faces, as well as simultaneous front and back camera support. It also enables collaborative sessions, which make it even faster to jump into a shared AR experience.
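For developers wondering how these capabilities are switched on, the sketch below shows one possible way to request People Occlusion and a collaborative session on a world-tracking configuration. The function name `makeSharedOcclusionConfiguration` is hypothetical, and the code assumes a device and OS version that support ARKit 3.

```swift
import ARKit

// Hypothetical helper: builds a world-tracking configuration that asks for
// People Occlusion (when supported) and enables collaborative sessions.
func makeSharedOcclusionConfiguration() -> ARWorldTrackingConfiguration {
    let configuration = ARWorldTrackingConfiguration()

    // People Occlusion is only requested when the device supports it.
    if ARWorldTrackingConfiguration.supportsFrameSemantics(.personSegmentationWithDepth) {
        configuration.frameSemantics.insert(.personSegmentationWithDepth)
    }

    // Allow this session to exchange collaboration data with peers.
    configuration.isCollaborationEnabled = true
    return configuration
}
```

The resulting configuration would typically be passed to an `ARSession` through its `run(_:)` method; sending collaboration data between devices is left to the app's own networking layer.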

RealityKit was built from the ground up for AR. It features photorealistic rendering, as well as incredible environment mapping and support for camera effects like noise and motion blur, making virtual content nearly indistinguishable from reality. RealityKit also features incredible animation, physics and spatial audio, and developers can harness the capabilities of RealityKit with the new RealityKit Swift API.

Reality Composer, a powerful new app for iOS, iPadOS and Mac, lets developers easily prototype and produce AR experiences with no prior 3D experience. With a simple drag-and-drop interface and a library of high-quality 3D objects and animations, Reality Composer lets developers place, move and rotate AR objects to assemble an AR experience, which can be directly integrated into an app in Xcode or exported to AR Quick Look.

Bring iPad Apps to Mac

New tools and APIs make it easier than ever to bring iPad apps to Mac. With Xcode, developers can open an existing iPad project and simply check a single box to automatically add fundamental Mac and windowing features, and adapt platform-unique elements like touch controls to keyboard and mouse — providing a huge head start on building a native Mac version of their app.

Mac and iPad apps share the same project and source code, so any changes made to the code translate to both the iPadOS and macOS versions of the app, saving developers valuable time and resources by allowing one team to work on both versions of their app.

With both Mac and iPad versions of an app available, users can enjoy the unique capabilities of each platform, including the precision and speed of the Mac's keyboard, mouse and trackpad, and unique Mac features like Touch Bar.

Core ML and Create ML

Core ML 3 supports the acceleration of more types of advanced, real-time machine learning models. With over 100 model layers now supported with Core ML, apps can use state-of-the-art models to deliver experiences that deeply understand vision, natural language and speech like never before.

For the first time, developers can update machine learning models on-device using model personalization. This cutting-edge technique gives developers the opportunity to provide personalized features without compromising user privacy. With Create ML, a dedicated app for machine learning development, developers can build machine learning models without writing code. Multiple-model training with different datasets can be used with new types of models like object detection, activity and sound classification.

Apple Watch

Developers can now build and design apps for Apple Watch that can work completely independently, even without an iPhone.

Developers can also take advantage of the Apple Neural Engine on Apple Watch Series 4 using Core ML. Incorporating Core ML-trained models into their apps and on-device interpretation of inputs gives users access to more intelligent apps.

A new streaming audio API means users can stream from their favorite third-party media apps with just their Apple Watch. An extended runtime API gives apps additional time to accomplish tasks on Apple Watch while the app is still in the foreground, even if the screen turns off, including access to allowed sensors that measure heart rate, location and motion.

Fast, Easy and Private Sign In Using Apple ID

Sign In with Apple makes it easy for users to sign in to apps and websites using their existing Apple ID. Instead of filling out forms, verifying email addresses or choosing passwords, users simply use their Apple ID to set up an account and start using an app right away, improving the user’s time to engagement.
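As an illustration of the sign-in flow described above, this sketch builds a Sign In with Apple request using the AuthenticationServices framework. The function names are hypothetical, and the choice to request both name and email is an assumption made for the example.

```swift
import AuthenticationServices

// Hypothetical helper: builds a request asking for the user's name and email.
func makeAppleIDRequest() -> ASAuthorizationAppleIDRequest {
    let provider = ASAuthorizationAppleIDProvider()
    let request = provider.createRequest()
    request.requestedScopes = [.fullName, .email]
    return request
}

// Hypothetical helper: wraps the request in a controller that drives the flow.
func makeSignInController() -> ASAuthorizationController {
    return ASAuthorizationController(authorizationRequests: [makeAppleIDRequest()])
}
```

An app would normally assign a delegate and a presentation context provider to the controller and then call `performRequests()`; the delegate receives the resulting credential or an error.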

All accounts are protected with two-factor authentication, making Sign In with Apple a great way for developers to improve the security of their app. It also includes a new anti-fraud feature to give developers confidence that the new users are real people and not bots or farmed accounts.

A new privacy-focused email relay service eliminates the need for users to disclose their personal email address, but still allows them to receive important messages from the app developer. And since Apple does not track users’ app activity or create a profile of app usage, information about the developer’s business and their users remains with the developer.

Other Developer Features

PencilKit makes it easy for developers to add Apple Pencil support to their apps and includes the redesigned tool palette.

SiriKit adds support for third-party audio apps, including music, podcasts and audiobooks, so developers can now integrate Siri directly into their iOS, iPadOS and watchOS apps, giving users the ability to control their audio with a simple voice command.

MapKit now provides developers a number of new features such as vector overlays, point-of-interest filtering, camera zoom and pan limits, and support for Dark Mode.
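The point-of-interest filtering and camera limits mentioned above might be applied along these lines. The `configure` function is a hypothetical helper, and the categories, coordinates and distances are arbitrary example values.

```swift
import MapKit

// Hypothetical helper: applies point-of-interest filtering and camera
// limits to a map view. All values are arbitrary examples.
func configure(_ mapView: MKMapView) {
    // Show only the selected point-of-interest categories.
    mapView.pointOfInterestFilter = MKPointOfInterestFilter(including: [.cafe, .park])

    // Limit how far the camera can zoom in and out (distances in meters).
    mapView.cameraZoomRange = MKMapView.CameraZoomRange(
        minCenterCoordinateDistance: 500,
        maxCenterCoordinateDistance: 50_000
    )

    // Keep the camera center inside a fixed region.
    let region = MKCoordinateRegion(
        center: CLLocationCoordinate2D(latitude: 37.3349, longitude: -122.0090),
        latitudinalMeters: 20_000,
        longitudinalMeters: 20_000
    )
    mapView.cameraBoundary = MKMapView.CameraBoundary(coordinateRegion: region)
}
```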

In addition to language enhancements targeted at SwiftUI, Swift 5.1 adds Module Stability — the critical foundation for building binary-compatible frameworks in Swift.

Powerful new Metal Device families facilitate code sharing between multiple GPU types on all Apple platforms, while support for the iOS Simulator makes it simple to build Metal apps for iOS and iPadOS.