Apple is developing a range of new accessibility features, including Live Captions and Door Detection, which lets users locate a door with an iPhone.

Using advancements across hardware, software, and machine learning, people who are blind or low vision can use their iPhone and iPad to navigate the last few feet to their destination with Door Detection; users with physical and motor disabilities who may rely on assistive features like Voice Control and Switch Control can fully control Apple Watch from their iPhone with Apple Watch Mirroring; and the Deaf and hard of hearing community can follow Live Captions on iPhone, iPad, and Mac.

Apple is also expanding support for its industry-leading screen reader VoiceOver with over 20 new languages and locales. These features will be available later this year with software updates across Apple platforms.

“Apple embeds accessibility into every aspect of our work, and we are committed to designing the best products and services for everyone,” said Sarah Herrlinger, Apple’s senior director of Accessibility Policy and Initiatives.

“We’re excited to introduce these new features, which combine innovation and creativity from teams across Apple to give users more options to use our products in ways that best suit their needs and lives.”

Door Detection for Users Who Are Blind or Low Vision

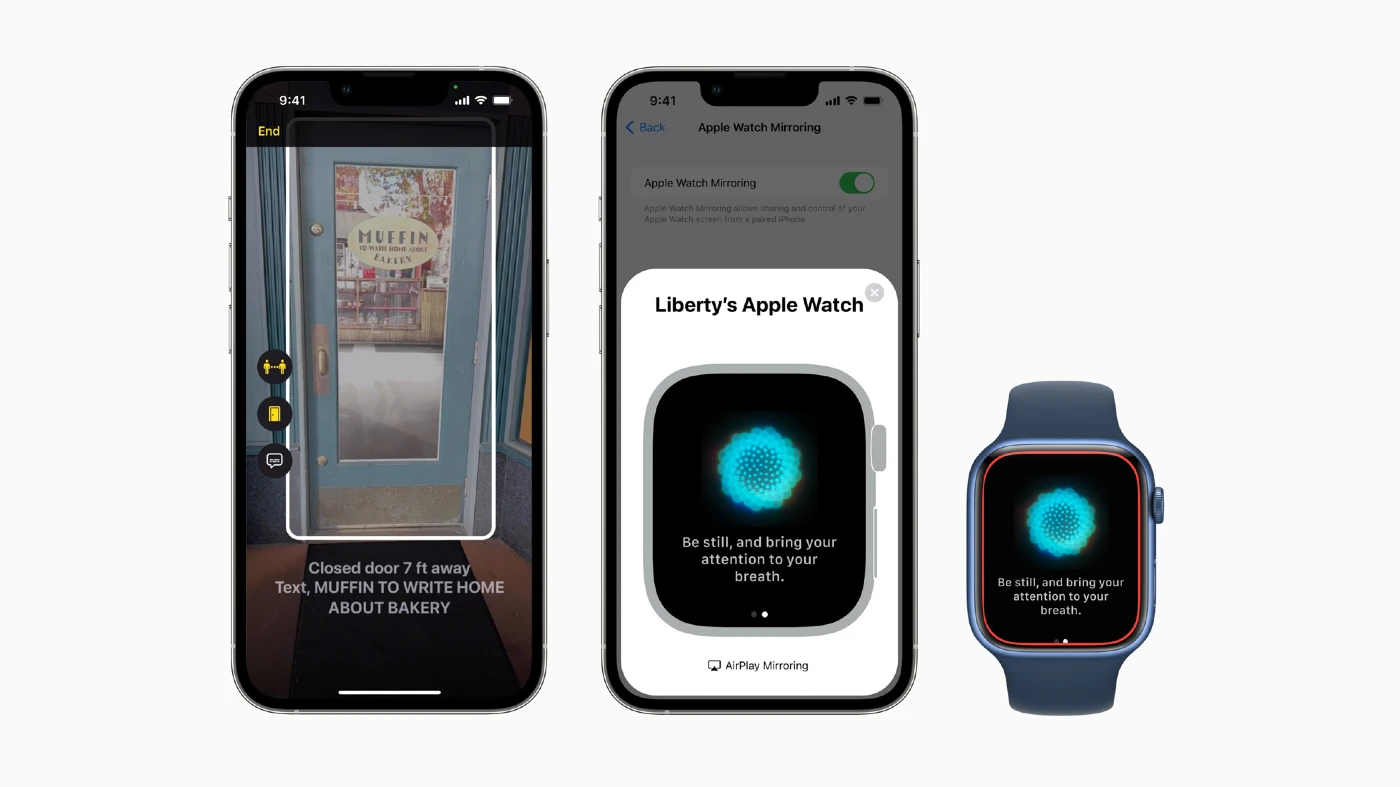

Apple is introducing Door Detection, a cutting-edge navigation feature for users who are blind or low vision. Door Detection can help users locate a door upon arriving at a new destination and understand how far they are from it. It can also describe door attributes, including whether the door is open or closed and, when it is closed, whether it can be opened by pushing, turning a knob, or pulling a handle.

Door Detection can also read signs and symbols around the door, like the room number at an office, or the presence of an accessible entrance symbol. This new feature combines the power of LiDAR, camera, and on-device machine learning, and will be available on iPhone and iPad models with the LiDAR Scanner.

Door Detection will be available in a new Detection Mode within Magnifier, Apple’s built-in app supporting blind and low vision users. Door Detection, along with People Detection and Image Descriptions, can each be used alone or simultaneously in Detection Mode, offering users with vision disabilities a go-to place with customizable tools to help navigate and access rich descriptions of their surroundings.

In addition to navigation tools within Magnifier, Apple Maps will offer sound and haptic feedback for VoiceOver users to identify the starting point for walking directions.

Advancing Physical and Motor Accessibility for Apple Watch

Apple Watch becomes more accessible than ever for people with physical and motor disabilities with Apple Watch Mirroring, which helps users control Apple Watch remotely from their paired iPhone. With Apple Watch Mirroring, users can control Apple Watch using iPhone’s assistive features like Voice Control and Switch Control, and use inputs including voice commands, sound actions, head tracking, or external Made for iPhone switches as alternatives to tapping the Apple Watch display.

Apple Watch Mirroring uses hardware and software integration, including advances built on AirPlay, to help ensure users who rely on these mobility features can benefit from unique Apple Watch apps like Blood Oxygen, Heart Rate, Mindfulness, and more.

Users can do even more with simple hand gestures to control Apple Watch. With new Quick Actions on Apple Watch, a double-pinch gesture can answer or end a phone call, dismiss a notification, take a photo, play or pause media in the Now Playing app, and start, pause, or resume a workout.

This builds on the technology used in AssistiveTouch on Apple Watch, which gives users with upper body limb differences the option to control Apple Watch with gestures like a pinch or a clench without having to tap the display.

Live Captions Come to iPhone, iPad, and Mac for Deaf and Hard of Hearing Users

For the Deaf and hard of hearing community, Apple is introducing Live Captions on iPhone, iPad, and Mac. Users can follow along more easily with any audio content — whether they are on a phone or FaceTime call, using a video conferencing or social media app, streaming media content, or having a conversation with someone next to them.

Users can also adjust font size for ease of reading. Live Captions in FaceTime attribute auto-transcribed dialogue to call participants, so group video calls become even more convenient for users with hearing disabilities.

When Live Captions are used for calls on Mac, users have the option to type a response and have it spoken aloud in real time to others who are part of the conversation. And because Live Captions are generated on device, user information stays private and secure.

VoiceOver Adds New Languages and More

VoiceOver, Apple’s screen reader for blind and low vision users, is adding support for more than 20 additional locales and languages, including Bengali, Bulgarian, Catalan, Ukrainian, and Vietnamese.

Users can also select from dozens of new voices that are optimized for assistive features across languages. These new languages, locales, and voices will also be available for Speak Selection and Speak Screen accessibility features.

Additionally, VoiceOver users on Mac can use the new Text Checker tool to discover common formatting issues such as duplicate spaces or misplaced capital letters, which makes proofreading documents or emails even easier.

Additional Features

- With Buddy Controller, users can ask a care provider or friend to help them play a game; Buddy Controller combines any two game controllers into one, so multiple controllers can drive the input for a single player.

- With Siri Pause Time, users with speech disabilities can adjust how long Siri waits before responding to a request.

- Voice Control Spelling Mode gives users the option to dictate custom spellings using letter-by-letter input.

- Sound Recognition can be customized to recognize sounds that are specific to a person’s environment, like their home’s unique alarm, doorbell, or appliances.

- The Apple Books app will offer new themes, and introduce customization options such as bolding text and adjusting line, character, and word spacing for an even more accessible reading experience.